AI Agents Explained: The Next Evolution in Generative AI

Explore the evolution of generative AI into agentic frameworks, bridging the gap between structured workflows and fully autonomous systems. Learn how AI agents are transforming automation, decision-making, and innovation in the future of work.

Generative AI (GenAI) agents are everywhere this year, with many calling it the “year of agents”, but what does that actually mean? The term might evoke sci-fi visions of the Terminator, but today’s AI agents are far from a dystopian Skynet future. Instead, they represent the next major advancement in how we work: automating tasks, supporting decision-making, and adapting dynamically to complex problems. We have already seen the GenAI buzz evolve from foundational Large Language Models (LLMs) to Retrieval-Augmented Generation (RAG), which enhances outputs with real-world data. Now the focus shifts to AI agents, a step closer to AI that not only responds to prompts but also actively assists, plans, and executes on our behalf.

What is an Agent?

Agents are intelligent systems designed to perform tasks in an automated, adaptive, and independent manner. Given the rapidly evolving nature of this technology, defining an “agent” precisely is challenging. Rather than adding another definition, this article aligns with the categorization used by Anthropic, a leading generative AI company. The sections that follow explore commonly referenced “agentic” frameworks and highlight the key components that differentiate AI agents from other approaches.

Architecture

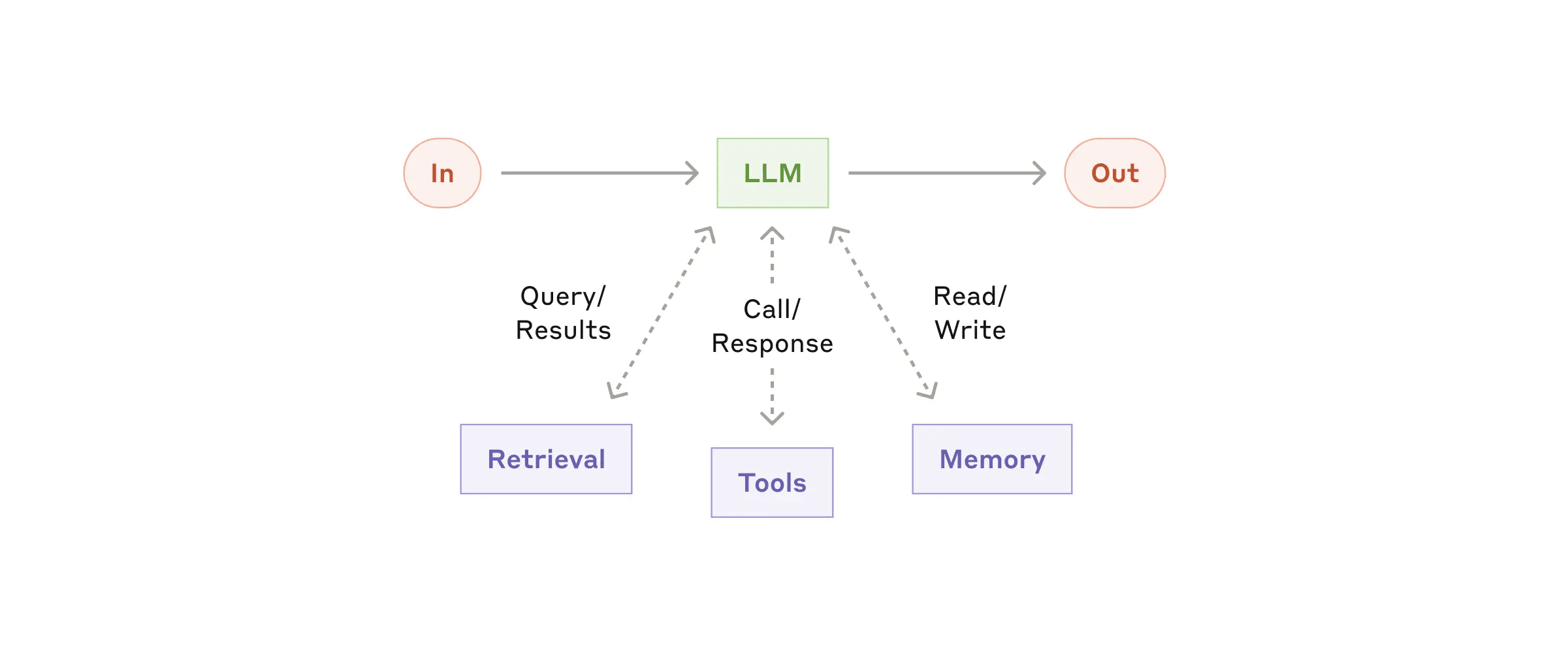

Before diving into the components, it is helpful to understand the general architecture of an AI agent and how it compares to existing technologies. At its core, it consists of:

- A foundation LLM trained on one or more datasets

- Memory to store and recall past interactions

- Entitlements that define its permissions and access

- Tools that perform tasks

- Reasoning to dynamically assess situations and make decisions

This architecture helps AI agents handle tasks autonomously across applications such as AI assistants, chat services, and autonomous vehicles. Unlike traditional automation systems built on rigid workflow execution and decision trees, agents bring adaptability, reasoning, and the ability to continuously update that reasoning as conditions change.
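As a rough illustration of how these pieces might fit together, the sketch below models an agent as a plain Python object. Everything in it is hypothetical: the `Agent` class, the `stub_llm` function standing in for a real model, and the `lookup_order` tool are assumptions made for illustration, not part of any particular framework.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


def stub_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real LLM call; picks a tool by keyword."""
    return "lookup_order" if "order" in prompt.lower() else "none"


@dataclass
class Agent:
    llm: Callable[[str], str]                        # foundation model
    tools: Dict[str, Callable[[str], str]]           # functions the agent may call
    entitlements: Set[str]                           # tool names it is permitted to use
    memory: List[str] = field(default_factory=list)  # past interactions

    def handle(self, request: str) -> str:
        # Reasoning: ask the model which tool fits the request.
        choice = self.llm(request)
        if choice not in self.tools:
            result = "No tool needed."
        elif choice not in self.entitlements:
            # Entitlements: refuse tools the agent has no permission for.
            result = f"Not permitted to use {choice}."
        else:
            result = self.tools[choice](request)
        # Memory: record the exchange so later requests can recall it.
        self.memory.append(f"{request} -> {result}")
        return result


tools = {"lookup_order": lambda req: "Order 123 has shipped."}
allowed = Agent(stub_llm, tools, entitlements={"lookup_order"})
restricted = Agent(stub_llm, tools, entitlements=set())

print(allowed.handle("Where is my order?"))
print(restricted.handle("Where is my order?"))
```

The two agents share the same model and tools; only their entitlements differ, which is why the second one declines the request.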

A useful comparison is to think of agents as an evolution of automation platforms like Zapier or IFTTT. These platforms let users create workflows with predefined steps that execute consistently. However, they are limited in their ability to reason, adapt, or solve problems dynamically. Agents enrich these linear workflows with an always-on intelligent operator that not only follows instructions but also adjusts, learns, and refines workflows in response to new variables, requests, and expectations.

AI Agent Components

With the architecture covered, let's take a deeper look at the core and additional components that make agents function. AI agents vary in complexity based on their purpose, but they all rely on these building blocks to process inputs, reason, act, and improve over time.

- Input - This is the starting point of the agent process. In simple agents, this input will come from user prompts that trigger the reasoning process in the LLM. In fully autonomous agents, the input can come from a scheduled trigger, real-time event, or state change that happens without any human interaction.

- LLM - The Large Language Model is the core engine of every agent, responsible for understanding input, reasoning, and generating responses from the various functions of the agent.

- Memory - Creates continuity by storing and recalling past interactions, decisions, feedback, and data. This prevents process overload while ensuring the agent refines its reasoning based on a finite selection of relevant information, rather than treating each task as an isolated, first-time interaction.

- Tools - Functions, APIs, and other services that allow the agent to fetch information and perform tasks beyond its trained knowledge. An example is an API call to a customer support CRM to retrieve a user’s information, enabling the agent to personalize responses and take appropriate action. Another is a web scraping or data retrieval tool that allows the agent to pull real-time market data, helping it make informed recommendations or automate reporting.

- Retrieval - The process of querying external databases and vector stores to supplement the agent’s reasoning.

- Output - The agent’s final action can come in many forms like a text response, API call, database update, or other task-like execution.
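To make the flow between these components concrete, here is a minimal sketch of a single agent turn. It is illustrative only: `stub_llm`, `crm_lookup`, `retrieve`, and the sample documents are hypothetical placeholders, and a real agent would call an actual model, CRM API, and vector store instead.

```python
from typing import Dict, List

# Hypothetical in-memory "knowledge base" standing in for a vector store.
DOCUMENTS: Dict[str, str] = {
    "refund": "Refunds are processed within 5 business days.",
    "shipping": "Standard shipping takes 3 to 7 days.",
}


def retrieve(query: str) -> List[str]:
    """Retrieval: return documents whose key appears in the query."""
    return [text for key, text in DOCUMENTS.items() if key in query.lower()]


def crm_lookup(user_id: str) -> Dict[str, str]:
    """Tool: hypothetical CRM call returning a customer record."""
    return {"id": user_id, "name": "Dana"}


def stub_llm(prompt: str) -> str:
    """LLM: placeholder that echoes the context it was given."""
    return f"Answer based on: {prompt}"


def run_agent(user_id: str, user_input: str, memory: List[str]) -> str:
    customer = crm_lookup(user_id)   # Tools
    context = retrieve(user_input)   # Retrieval
    recent = memory[-3:]             # Memory: only the latest few turns
    prompt = (
        f"customer={customer['name']}; history={recent}; "
        f"context={context}; question={user_input}"
    )
    output = stub_llm(prompt)        # LLM
    memory.append(user_input)        # remember this turn for next time
    return output                    # Output


memory: List[str] = []
print(run_agent("u1", "How long does a refund take?", memory))
```

Limiting memory to the last few turns is one simple way to give the model a finite selection of relevant history rather than everything it has ever seen.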

Agentic Systems

Agentic systems can take different forms, with the key differentiator being how much autonomy rests with the human versus the model. On one end of the autonomy scale are workflows: structured, predefined processes maintained by humans. On the other end, fully autonomous agents dynamically make decisions with minimal human intervention.

Workflow Systems

Workflows represent structured systems where tasks are divided into discrete steps. This design creates control and brings transparency to how the process operates from start to finish. The following are common approaches to workflow architecture.

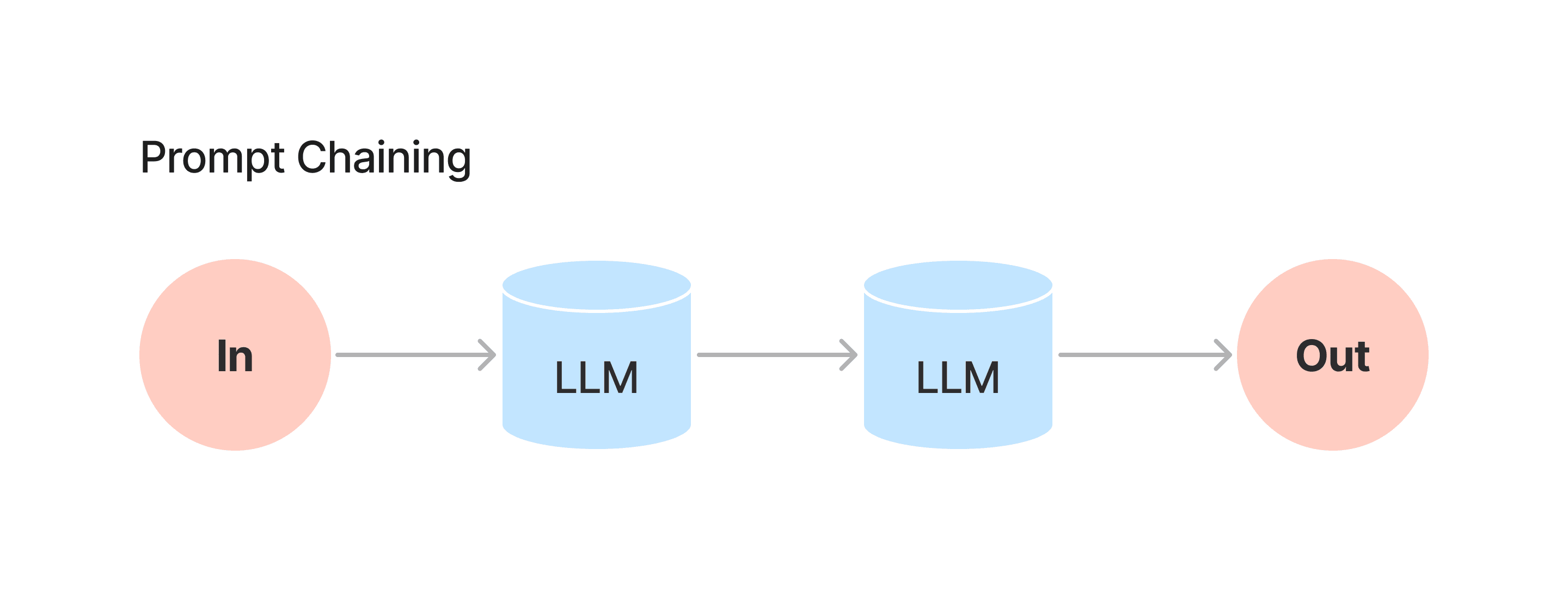

- Prompt Chaining - A workflow that breaks down a complex task into multiple LLM interactions, producing more precise output than a single prompt could achieve. It gives you more control and transparency over each step in the reasoning process.

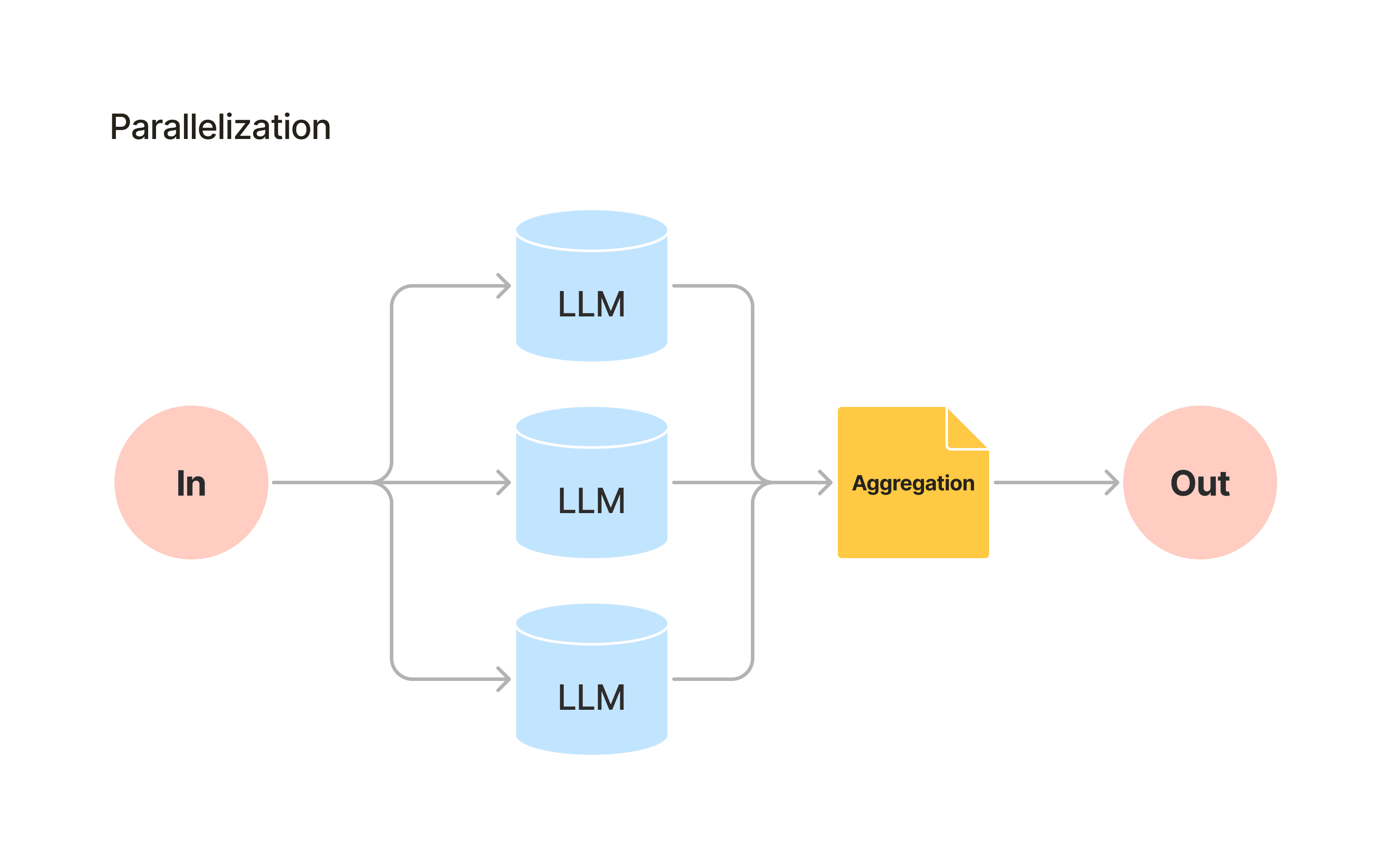
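Prompt chaining can be sketched in a few lines. The example below is an assumption-laden illustration: `stub_llm` is a hypothetical stand-in that simply tags text with the instruction it received, so the hand-off between steps is visible.

```python
from typing import Callable, List


def stub_llm(prompt: str) -> str:
    """Hypothetical LLM stand-in: tags the text with the instruction it received."""
    instruction, _, text = prompt.partition(": ")
    return f"[{instruction}] {text}"


def chain(steps: List[str], text: str, llm: Callable[[str], str] = stub_llm) -> List[str]:
    """Run each instruction in order, feeding the previous output into the next call."""
    outputs = []
    for instruction in steps:
        text = llm(f"{instruction}: {text}")
        outputs.append(text)  # keep every intermediate result for inspection
    return outputs


results = chain(["outline", "draft", "polish"], "AI agents")
for step_output in results:
    print(step_output)
```

Keeping the intermediate outputs is what provides the transparency: each step can be inspected or validated before the next one runs.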

- Parallelization - A workflow that runs multiple LLMs simultaneously and aggregates the responses into a single output to improve efficiency and accuracy.

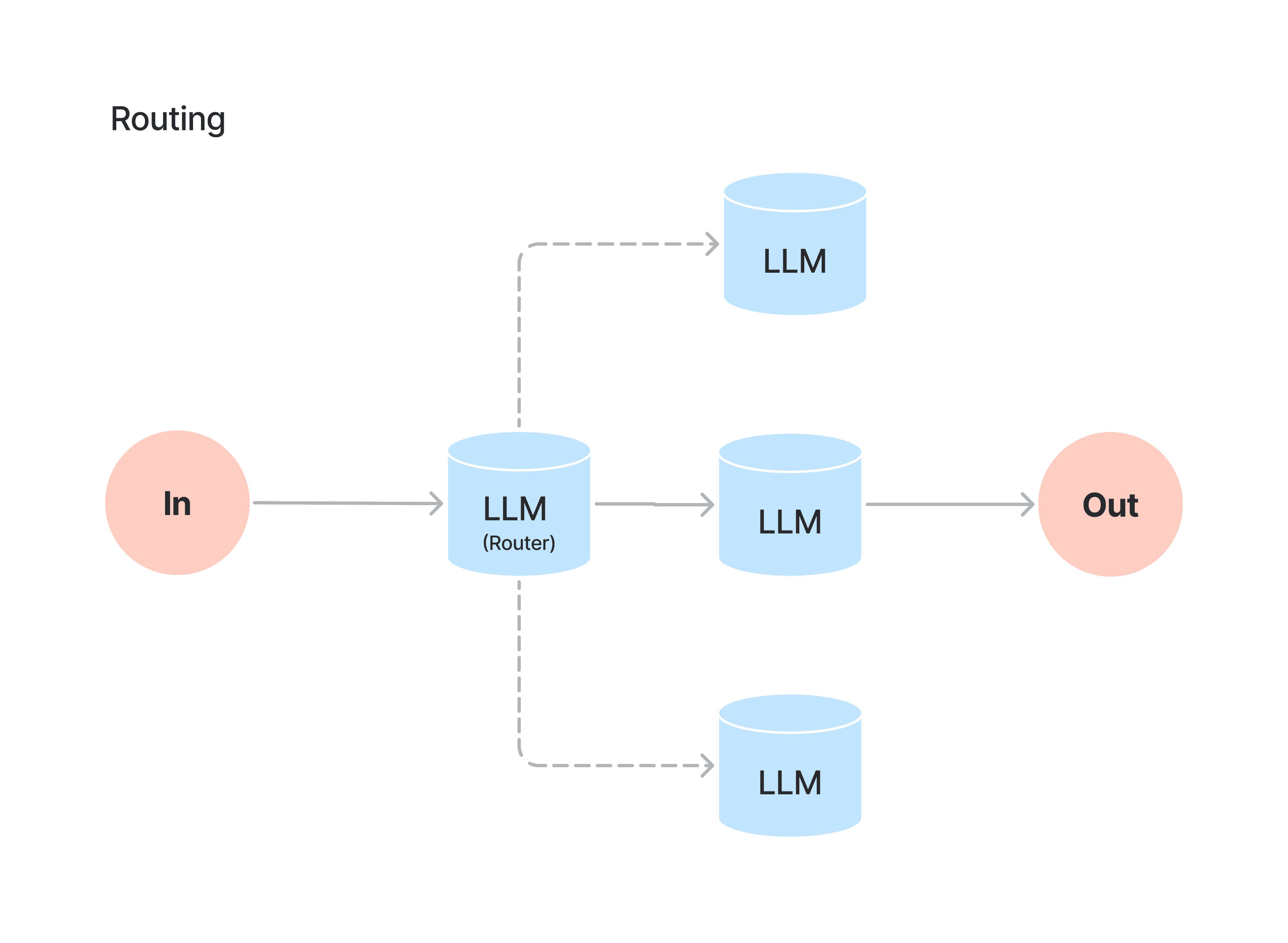
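One possible shape for parallelization is shown below, using Python's standard `concurrent.futures` thread pool. The models are hypothetical stubs that return fixed labels, and majority voting is just one assumed aggregation strategy among many.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def make_stub_model(label: str) -> Callable[[str], str]:
    """Hypothetical model factory: each 'model' always answers with its label."""
    return lambda prompt: label


def run_parallel(models: List[Callable[[str], str]], prompt: str) -> List[str]:
    """Send the same prompt to every model at once and collect the answers."""
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        return list(pool.map(lambda model: model(prompt), models))


def majority_vote(answers: List[str]) -> str:
    """Aggregate by picking the most common answer."""
    return Counter(answers).most_common(1)[0][0]


models = [make_stub_model("spam"), make_stub_model("spam"), make_stub_model("not spam")]
answers = run_parallel(models, "Classify: 'You won a prize!'")
print(majority_vote(answers))
```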

- Routing - A workflow where a router LLM determines which function or process should be performed based on its interpretation of the task.

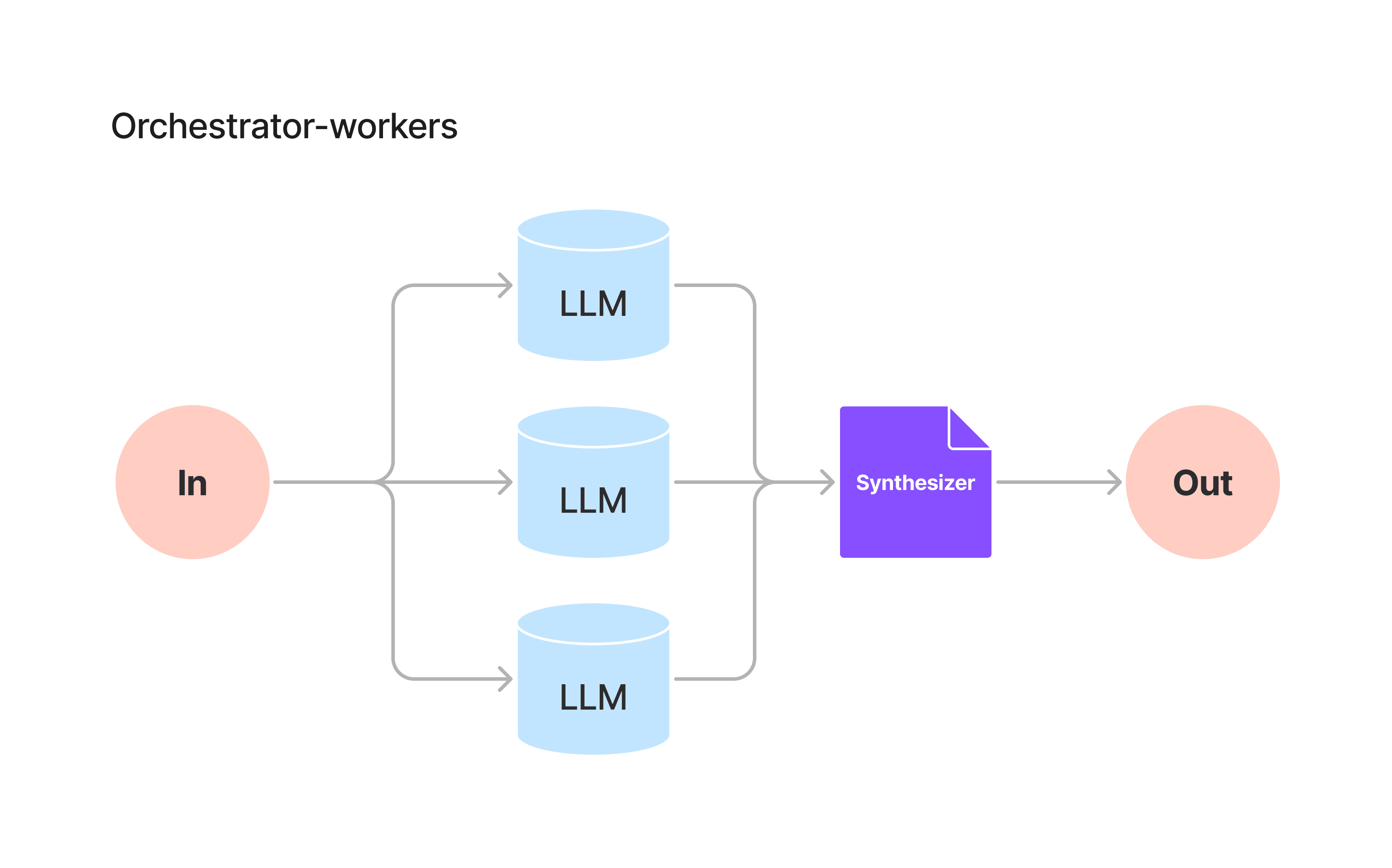
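A routing workflow might look like the following sketch. The router here is a hypothetical keyword matcher standing in for an LLM classifier, and the handler names are invented for illustration.

```python
from typing import Callable, Dict


def stub_router_llm(task: str) -> str:
    """Hypothetical router: a real system would ask an LLM to classify the task."""
    text = task.lower()
    if "refund" in text or "invoice" in text:
        return "billing"
    if "error" in text or "crash" in text:
        return "technical"
    return "general"


HANDLERS: Dict[str, Callable[[str], str]] = {
    "billing": lambda task: f"Billing team handles: {task}",
    "technical": lambda task: f"Tech support handles: {task}",
    "general": lambda task: f"General assistant handles: {task}",
}


def route(task: str) -> str:
    label = stub_router_llm(task)
    # Fall back to the general handler if the router returns an unknown label.
    handler = HANDLERS.get(label, HANDLERS["general"])
    return handler(task)


print(route("I need a refund"))
print(route("The app shows an error"))
```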

- Orchestrator-Worker - A workflow that contains an orchestrator LLM that decomposes a task and delegates it to specialized worker LLMs before the results are then synthesized into a response.

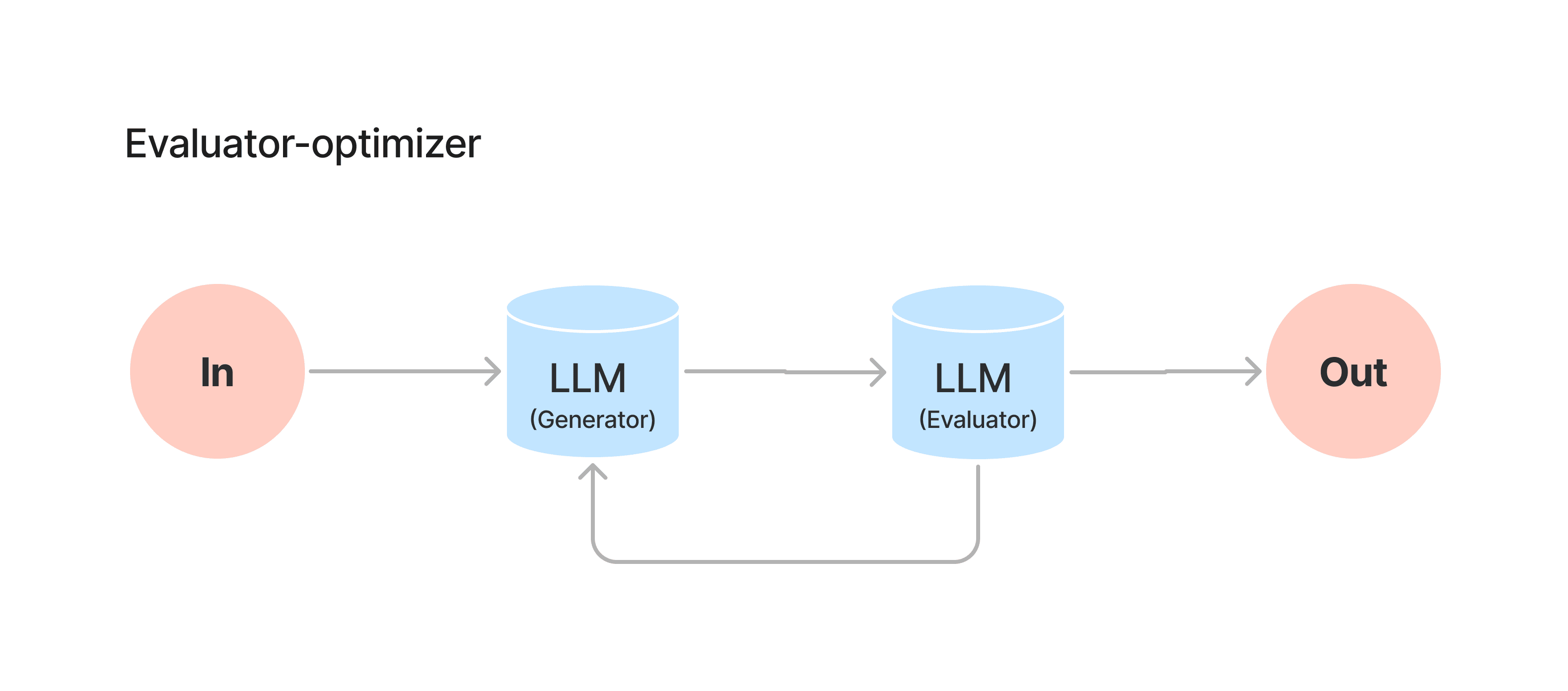
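The orchestrator-worker pattern can be approximated as below. This is a sketch under stated assumptions: `stub_orchestrator` returns a fixed plan where a real orchestrator LLM would decide the subtasks, and the workers are hypothetical one-line functions.

```python
from typing import Callable, Dict, List


def stub_orchestrator(task: str) -> List[str]:
    """Hypothetical decomposition: a real orchestrator LLM would plan these subtasks."""
    return [f"research {task}", f"write {task}", f"review {task}"]


# Hypothetical specialized workers, keyed by the verb that starts each subtask.
WORKERS: Dict[str, Callable[[str], str]] = {
    "research": lambda sub: f"notes for '{sub}'",
    "write": lambda sub: f"draft for '{sub}'",
    "review": lambda sub: f"feedback for '{sub}'",
}


def orchestrate(task: str) -> str:
    subtasks = stub_orchestrator(task)
    results = []
    for sub in subtasks:
        verb = sub.split(" ", 1)[0]
        results.append(WORKERS[verb](sub))  # delegate to the matching worker
    return " | ".join(results)              # synthesize into one response


print(orchestrate("a blog post"))
```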

- Evaluator-Optimizer - A workflow where a generator LLM produces an output that is then assessed by an evaluator LLM. If the output is rejected, the evaluator sends feedback to the generator LLM, which tries again. The loop repeats until the evaluator approves the response, yielding a higher-quality final output.

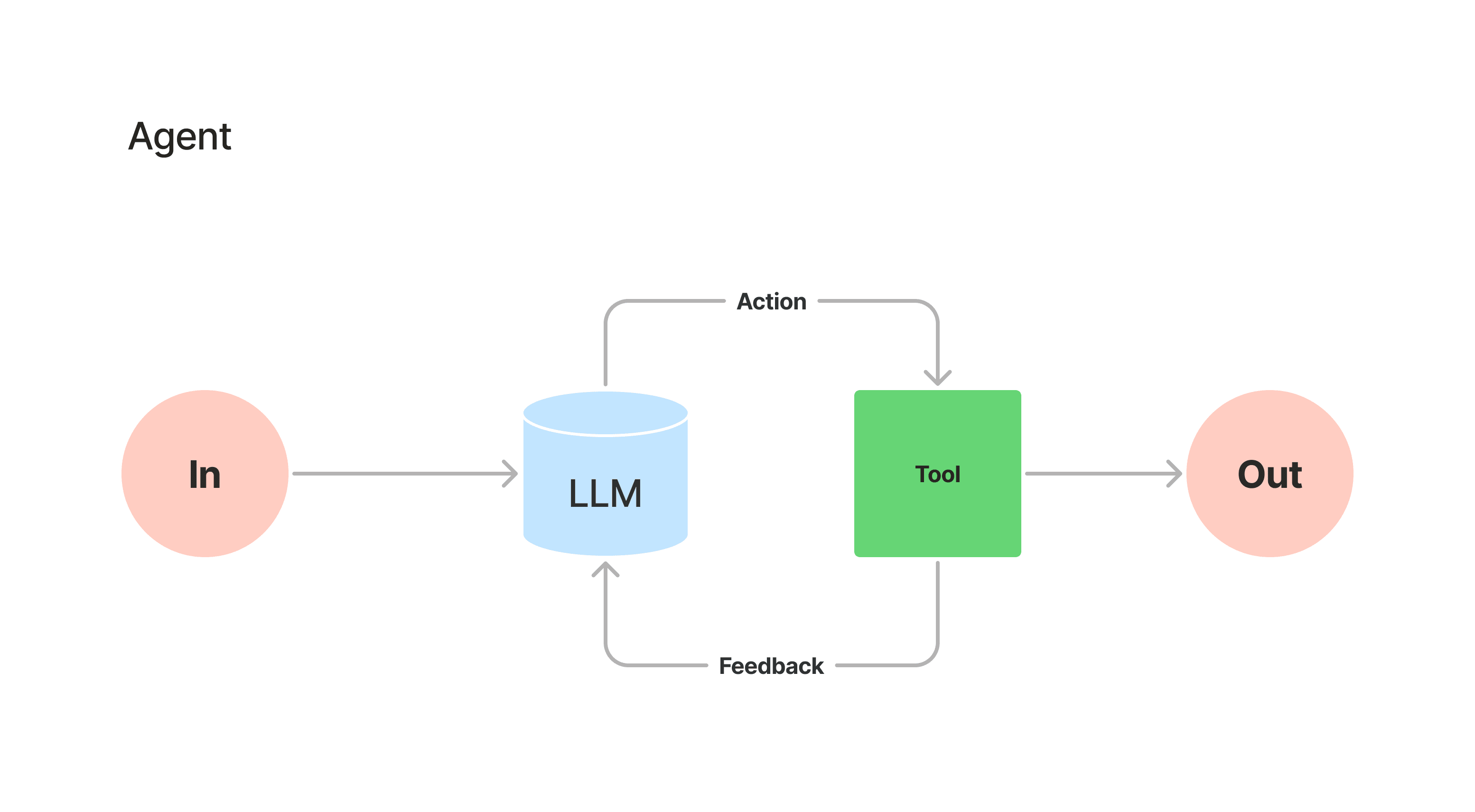
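The evaluator-optimizer loop is sketched below with two hypothetical stubs: a generator that only improves once it receives feedback, and an evaluator with a single made-up acceptance rule. The `max_rounds` cap is an added assumption so that a draft that never passes cannot loop forever.

```python
from typing import Optional, Tuple


def stub_generator(task: str, feedback: Optional[str]) -> str:
    """Hypothetical generator: adds a greeting only after being told to."""
    draft = f"Summary of {task}."
    if feedback == "add a greeting":
        draft = "Hello! " + draft
    return draft


def stub_evaluator(draft: str) -> Tuple[bool, Optional[str]]:
    """Hypothetical evaluator: approves drafts that start with a greeting."""
    if draft.startswith("Hello"):
        return True, None
    return False, "add a greeting"


def generate_until_approved(task: str, max_rounds: int = 3) -> Tuple[str, int]:
    feedback = None
    draft = ""
    for round_number in range(1, max_rounds + 1):
        draft = stub_generator(task, feedback)
        approved, feedback = stub_evaluator(draft)
        if approved:
            return draft, round_number
    # Not approved within the cap: return the last draft anyway.
    return draft, max_rounds


final, rounds = generate_until_approved("the quarterly report")
print(final, rounds)
```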

Agent Systems

Unlike the structured workflow approaches, agents operate with greater autonomy. They dynamically adapt their actions and responses based on environmental feedback. Rather than following predefined steps that map to a clear visual architecture like the workflows above, they continuously assess, adjust, and make decisions in real time, which allows for more flexibility and independent problem-solving.
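The assess, adjust, and decide cycle can be pictured as a loop that re-reads the environment before every action. The sketch below is hypothetical throughout: `stub_policy` stands in for an LLM choosing the next action, and `apply_action` simulates an environment consisting of a few counters.

```python
from typing import Dict, List, Tuple


def stub_policy(observation: Dict[str, int]) -> str:
    """Hypothetical decision step; a real agent would ask an LLM what to do next."""
    if observation["errors"] > 0:
        return "fix_error"
    if observation["tests_passed"] < observation["tests_total"]:
        return "run_tests"
    return "done"


def apply_action(state: Dict[str, int], action: str) -> Dict[str, int]:
    """Hypothetical environment: returns the new state after an action."""
    new_state = dict(state)
    if action == "fix_error":
        new_state["errors"] -= 1
    elif action == "run_tests":
        new_state["tests_passed"] = new_state["tests_total"]
    return new_state


def run_loop(state: Dict[str, int], max_steps: int = 10) -> Tuple[Dict[str, int], List[str]]:
    history: List[str] = []
    for _ in range(max_steps):
        action = stub_policy(state)          # assess the latest feedback
        if action == "done":
            break
        history.append(action)
        state = apply_action(state, action)  # act, then observe the result
    return state, history


final_state, actions = run_loop({"errors": 2, "tests_passed": 0, "tests_total": 5})
print(actions)
```

Nothing in `run_loop` fixes the order of actions in advance; the sequence emerges from the feedback at each step, which is the main contrast with the workflow patterns above.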

Future of AI Agents

The evolution of generative AI into agentic frameworks represents the next pivotal step in AI solutions, bridging the gap between rigid workflows and fully autonomous systems. As these systems advance, the distinction between structured and adaptive automation will blur, unlocking more dynamic, efficient, and scalable solutions than we have today. Understanding the core components and levels of autonomy is key to realizing their full potential. Those who embrace and refine these systems now will be at the forefront of AI-driven work, redefining how we operate and innovate.